This is a step-by-step, exam-ready solution for CKS QUESTION 15.

Follow the steps in exactly this order and make no changes beyond those listed.

QUESTION 15 --- Istio mTLS (EXAM MODE)

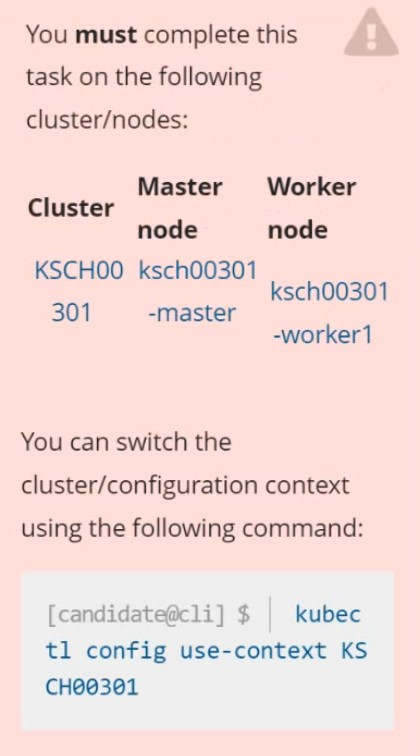

1) Connect to the correct host

ssh cks000041

sudo -i

export KUBECONFIG=/etc/kubernetes/admin.conf

2) Ensure sidecar injection is enabled for the mtls namespace

2.1 Check current namespace labels

kubectl get ns mtls --show-labels

2.2 Enable automatic Istio sidecar injection

kubectl label namespace mtls istio-injection=enabled --overwrite

Verify:

kubectl get ns mtls --show-labels | grep istio-injection

Expected:

istio-injection=enabled

3) Ensure ALL Pods get the istio-proxy sidecar

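Existing Pods do not pick up the sidecar automatically, because injection happens only when a Pod is created.

Restart the workloads in the namespace so that their Pods are recreated with the sidecar.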

3.1 Restart all Deployments in mtls

kubectl -n mtls rollout restart deployment
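
Before checking the Pods, it can help to wait until the restarted Deployments have finished rolling out, since old Pods without the sidecar may still be terminating. The following is a minimal sketch, not part of the original solution: the helper name wait_for_rollouts is hypothetical, and the 120s timeout is an arbitrary assumption.

```shell
# Hypothetical helper: waits for every Deployment in a namespace to finish
# rolling out. The namespace defaults to mtls; the timeout is an assumption.
wait_for_rollouts() {
  local ns="${1:-mtls}"
  local dep
  # "get deployments -o name" prints one deployment.apps/<name> per line.
  for dep in $(kubectl -n "$ns" get deployments -o name); do
    kubectl -n "$ns" rollout status "$dep" --timeout=120s || return 1
  done
}
```

Run it as wait_for_rollouts mtls. A non-zero exit status means at least one Deployment did not become ready within the timeout.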

3.2 Verify Pods now have 2 containers (app + istio-proxy)

kubectl -n mtls get pods

Then check one Pod (replace <pod-name> with a name from the previous output):

kubectl -n mtls get pod <pod-name> -o jsonpath='{.spec.containers[*].name}{"\n"}'

Expected output includes:

istio-proxy

4) Configure mutual TLS (mTLS) in STRICT mode

4.1 Create a PeerAuthentication for the mtls namespace

cat <<EOF | kubectl apply -f -
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: mtls-strict
  namespace: mtls
spec:
  mtls:
    mode: STRICT
EOF

5) Verify mTLS policy is applied

kubectl -n mtls get peerauthentication

kubectl -n mtls describe peerauthentication mtls-strict

Expected:

Mode: STRICT
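
As an alternative to reading the describe output, the mode can be read directly from the resource. This is a small sketch under stated assumptions: the helper name peerauth_mode is hypothetical, and it defaults to the mtls namespace and the mtls-strict policy created in step 4.1.

```shell
# Hypothetical helper: prints the mTLS mode of a PeerAuthentication.
# Arguments: namespace (default mtls), policy name (default mtls-strict).
peerauth_mode() {
  kubectl -n "${1:-mtls}" get peerauthentication "${2:-mtls-strict}" \
    -o jsonpath='{.spec.mtls.mode}'
}
```

For example, [ "$(peerauth_mode)" = "STRICT" ] && echo "STRICT is set" prints the confirmation only when the policy is in STRICT mode.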

6) Final verification (exam confidence check)

6.1 Confirm all Pods are Running

kubectl -n mtls get pods

6.2 Confirm sidecar injection everywhere

kubectl -n mtls get pods -o jsonpath='{range .items[*]}{.metadata.name}{" -> "}{.spec.containers[*].name}{"\n"}{end}'

Each line must include istio-proxy.
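
With many Pods, scanning the list by eye is error-prone. The sketch below filters lines shaped like "pod -> container container ..." and prints only the Pods that lack istio-proxy. The helper name pods_missing_sidecar is hypothetical, and it assumes the sidecar appears under spec.containers; if the mesh injects istio-proxy as an init container, the check would need to read spec.initContainers instead.

```shell
# Hypothetical helper: reads lines shaped like
#   <pod> -> <container> <container> ...
# on stdin and prints every Pod whose container list lacks istio-proxy.
# Exit status is 1 if any Pod is missing the sidecar, 0 otherwise.
pods_missing_sidecar() {
  awk '
    NF == 0 { next }                      # skip blank lines
    {
      found = 0
      for (i = 3; i <= NF; i++)           # fields after "<pod> ->"
        if ($i == "istio-proxy") found = 1
      if (!found) { print $1; missing = 1 }
    }
    END { exit (missing ? 1 : 0) }
  '
}

# Usage (feeds the per-Pod container list into the filter):
#   kubectl -n mtls get pods -o jsonpath='{range .items[*]}{.metadata.name}{" -> "}{.spec.containers[*].name}{"\n"}{end}' | pods_missing_sidecar
```

Empty output with exit status 0 means every listed Pod has the sidecar.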